I love the City to Surf. Being a fun run, it is not only a lot of fun, but also a great leveler.

Every year, I am overtaken by someone who has the look of someone I should be beating; and sometimes I even overtake a few who look like they ought to be beating me!

I especially enjoy this aspect of fun runs: they remind me that there is more to everyone than the things I can see.

For a few reasons, this year’s event was special to me; and as I have a bit of time, and the inclination to try some new tricks, I figured that I would post a few charts.

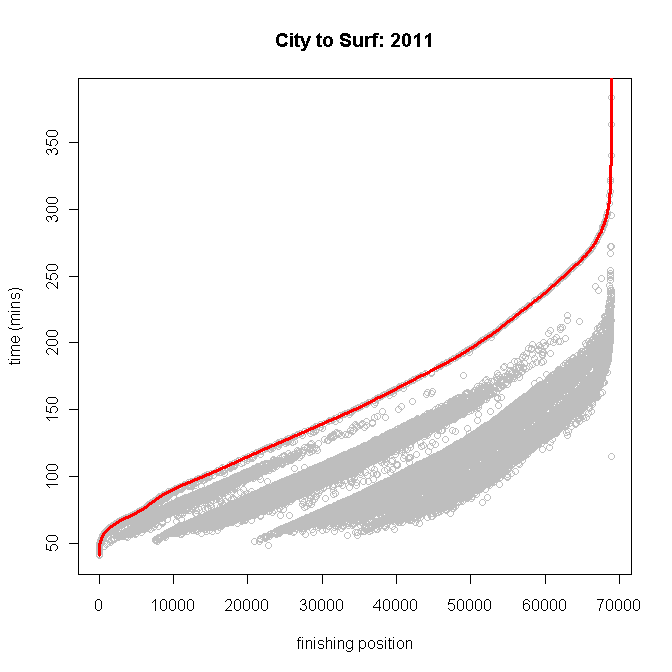

First up, here is a point-chart of times by finishing position. Note how the staggered starting waves create tide lines in the finishing times and ranks — there are clearly some fast runners in the back of the pack, and some slow ones in the front groups.

The grey points are net time (from your bib-tag), and the red line at the top is gross time (time on the official clock). Thus, if you finished (say) 30,000th, your personal time is a grey point (at 1hr 13m 04s), and the official City to Surf clock read 2hr 19m 27s when you crossed the line.

Men are pretty competitive from 16 to 39, but after that we tend to slow up a little. The fastest runner this year was in the 20-29 division; however, the 30-39 group outperforms on average.

I am surprised to see how much variation there is in the women’s results. Performance improves markedly as women move into the 20-29 division, is steady in the 30-39 division, and then falls away just as sharply thereafter.

As in the men’s case, the fastest woman was in the 20-29 division, and the fastest group was the 30-39 division.

Women’s ranges are notably wider than men’s ranges. At least in part, I put this down to the prams I see so many super-mums pushing up heart-break hill. That’s love…

How did I do? I ran 70m 01s, which was 1s over my target, and 24s shy of the cut-off for the top quartile of my division (M30-39 Q1 69m 37s, median 79m 35s).

Next year I aim to run sub-60.

______________________________________

technical stuff

I scraped the data from the City to Surf website using the Python script below. This is my first attempt at Python code, so please comment with suggested improvements.

There are two bugs in the code of which I am aware — please help me fix them?

First, when a name string has unusual characters (Scandinavian names, for example) it crashes. Second, when there are missing strings (division was sometimes missing – I suppose some folks like to conceal their age) there is no ‘a’ tag, and so the parsing gets all mixed up, which eventually causes a crash when the for loop tries to ref a string that doesn’t exist on the page.

    ## RA_C2S_2011.py ##

    import socket
    import urllib2
    from os.path import join as pjoin

    from BeautifulSoup import BeautifulSoup

    socket.setdefaulttimeout(60)

    # create a file to put the data into
    filename = "c2s-2011_1.txt"
    path_to_file = pjoin("C:\\", "py", "oput", "c2s", filename)
    f = open(path_to_file, "w")

    # the results are paged 25 runners at a time
    for i in range(1, 86695, 25):
        page = urllib2.urlopen("http://tiktok.biz/list.asp?a=1&EventID=city2surf&Edition=2011&RaceID=c2s&nDBFirst=" + str(i))
        soup = BeautifulSoup(page)
        tags = soup.findAll("td", attrs={"class": "gridTable-dat"})
        div_tags = soup.findAll("a", attrs={"class": "gridDatLink"})

        # each runner takes 11 cells and 2 links (gender, division)
        for n in range(0, 25, 1):
            bib = tags[1 + n*11].string
            name = tags[2 + n*11].string
            ftime = tags[3 + n*11].string
            ntime = tags[4 + n*11].string
            posi = tags[5 + n*11].string
            gend = div_tags[2*n].string
            div = div_tags[1 + 2*n].string
            f.write(posi + ";" + ftime + ";" + ntime + ";" + bib + ";" + name + ";" + gend + ";" + div + "\n")

1. It’s most probably due to a character-encoding mismatch between the page and BeautifulSoup. Do you have an example of a result page where that is happening?

2. Your code assumes that all tags are always present, but you’ll need to check the size of the returned tags and div_tags to avoid pointing to non-existent tags.
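Here is a minimal sketch of both suggestions. It is written for Python 3 and leaves out the fetching and BeautifulSoup parsing, so it works on plain lists of strings such as the `.string` values pulled from `tags` and `div_tags`. The names `build_records`, `write_records`, `CELLS_PER_ROW` and `LINKS_PER_ROW` are made up for illustration; the values 11 and 2 are read off the indexing in the script above. It also assumes that the name crash comes from writing non-ASCII text without an explicit encoding, which has not been confirmed against a real results page.

```python
import io

CELLS_PER_ROW = 11   # from the script's tags[k + n*11] indexing
LINKS_PER_ROW = 2    # gender and division links, as in div_tags[2*n]


def build_records(cells, links):
    """Turn flat lists of cell and link strings into ';'-joined records.

    Returns an empty list when the number of links does not match the
    number of rows, because the positional pairing of links to rows can
    no longer be trusted (for example when a division link is missing).
    """
    n_rows = len(cells) // CELLS_PER_ROW       # count complete rows only
    if len(links) != n_rows * LINKS_PER_ROW:
        return []
    records = []
    for n in range(n_rows):
        row = cells[n * CELLS_PER_ROW:(n + 1) * CELLS_PER_ROW]
        bib, name, ftime, ntime, posi = row[1:6]
        gend, div = links[n * LINKS_PER_ROW:(n + 1) * LINKS_PER_ROW]
        fields = [posi, ftime, ntime, bib, name, gend, div]
        # an empty cell may come back as None; write an empty field instead
        records.append(";".join(x if x is not None else "" for x in fields))
    return records


def write_records(path, records):
    """Write records as UTF-8 so names such as 'Søren' do not crash the write."""
    with io.open(path, "w", encoding="utf-8") as f:
        for rec in records:
            f.write(rec + u"\n")
```

Because `n_rows` is computed from the cells actually returned, a short final page no longer runs off the end of the list. Skipping a whole page when a link is missing avoids the crash but loses up to 25 runners; looking up the links inside each table row would keep them, at the cost of knowing more about the page's HTML than the script shows.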